In this exclusive preview, ahead of his headline spot at the Digital Transformation Expo (DTX) tomorrow, Wednesday April 29, Howard Marshall, former Deputy Assistant Director of the FBI’s Cyber Division, discusses new cyber threats and how artificial intelligence and machine learning can be used to help tackle them.

For a long time, cyber conflict was treated as separate from physical conflict: a parallel battleground that was disruptive but rarely life-threatening.

However, today, the lines between cyber and physical are blurring and we are frequently seeing physical tensions escalate in the digital domain.

The modern threat landscape is shaped by a constant stream of low-level cyber activity between nation states. Espionage, reconnaissance, intellectual property theft. It happens every day, but largely out of sight.

While there may be no formal declaration of conflict, in cyberspace hostilities are always underway.

And while there fortunately appears to be an unwritten line that most actors are reluctant to cross, the capability to do so is very real. That is what makes the current moment so uneasy.

Because the question is no longer whether cyber can cause physical harm. It is when, and under what circumstances, this actually happens.

We have already seen glimpses of what that looks like.

The NotPetya attack, initially targeted at Ukraine, quickly spread far beyond its intended scope. It disrupted organisations across the world, including critical services. In one case, it forced a major hospital to shut down systems and divert patients. No one was seriously injured, but they could have been.

As we saw with NotPetya, one of the uncomfortable truths about modern cyber incidents is that they are rarely contained. Attacks designed for one target can cascade unpredictably across interconnected systems.

And these systems increasingly underpin essential services.

Healthcare, energy, manufacturing, transport. All are now digitally enabled and connected. Yet, in many cases, security was not the primary consideration when those systems were designed.

This creates a new kind of risk.

It is no longer just about data loss or financial damage. It is about operational disruption in environments where failure has real-world societal consequences.

This is where AI becomes critical.

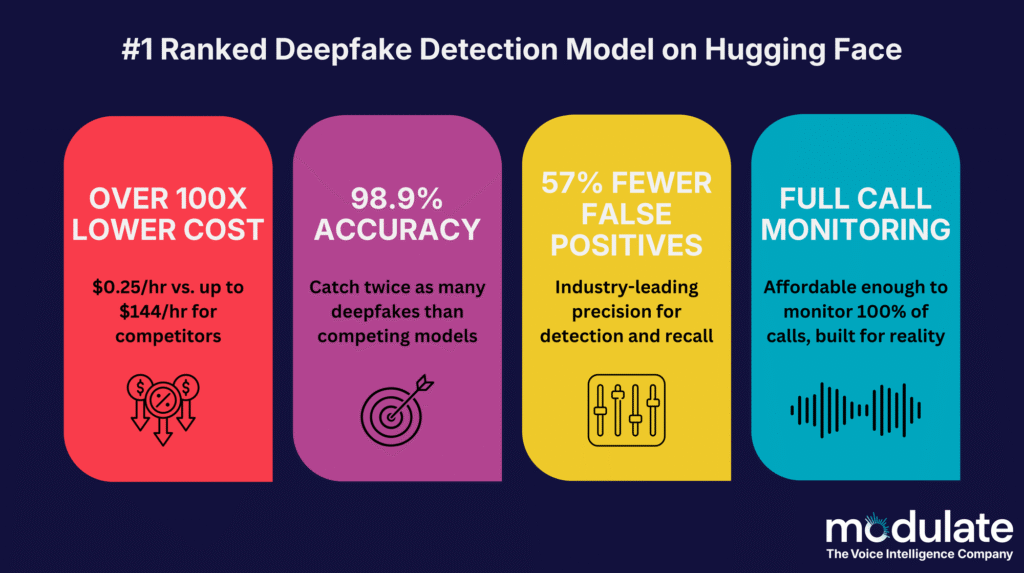

As attacks scale in speed and complexity, AI is already being used to automate social engineering, accelerate intrusion techniques and increase the volume of malicious activity.

But it also offers defenders a way to respond.

When used effectively, AI can reduce detection times, surface anomalies earlier and process data at a scale no human team could match. It has the potential to shift security from reactive to proactive.

However, it is not a silver bullet. AI is only as strong as the data behind it and still requires human judgement. Blind reliance introduces new risks, from flawed outputs to misplaced trust in automated decisions.

The real value lies in how it is applied. Combining human expertise with machine speed. Enhancing visibility, not replacing it. Embedding it across the full lifecycle of risk, from development through to deployment.

Geopolitical cyber conflict is no longer theoretical. It is already shaping the world we operate in, affecting organisations directly and indirectly, through methods few are prepared for.

The organisations that understand how AI can support their defences in this new realm of attack activity will be best placed to withstand what comes next.

Howard Marshall, former Deputy Assistant Director of the FBI’s Cyber Division, is headlining DTX Manchester on 29–30 April 2026.

Howard will speak on the Main Stage in a session titled ‘Forget the AI hype: How we use AI & ML to stop cyber crime’.

Join him on Wednesday April 29, from 10:00AM to 10:45AM.
